-

Trying to set up LLama but i get a error saying CUDA has no path set

Hello y'all, i was using this guide to try and set up llama again on my machine, i was sure that i was following the instructions to the letter but when i get to the part where i need to run setup_cuda.py install i get this error

File "C:\Users\Mike\miniconda3\Lib\site-packages\torch\utils\cpp_extension.py", line 2419, in _join_cuda_home raise OSError('CUDA_HOME environment variable is not set. ' OSError: CUDA_HOME environment variable is not set. Please set it to your CUDA install root. (base) PS C:\Users\Mike\text-generation-webui\repositories\GPTQ-for-LLaMa>i'm not a huge coder yet so i tried to use setx to set CUDA_HOME to a few different places but each time doing

echo %CUDA_HOMEdoesn't come up with the address so i assume it failed, and i still can't run setup_cuda.pyAnyone have any idea what i'm doing wrong?

-

A font with an LLM embedded

You type "Once upon a time!!!!!!!!!!" and those exclamation marks are rendered to show the LLM generated text, using a tiny 30MB model

via https://simonwillison.net/2024/Jun/23/llama-ttf/

- ai.meta.com Sharing new research, models, and datasets from Meta FAIR

Meta FAIR is releasing several new research artifacts. Our hope is that the research community can use them to innovate, explore, and discover new ways to apply AI at scale.

- www.wired.com Publishers Target Common Crawl In Fight Over AI Training Data

Long-running nonprofit Common Crawl has been a boon to researchers for years. But now its role in AI training data has triggered backlash from publishers.

-

[Question] Why is there no Q8 quantization for Phi-3-V?

Hello! I am looking for some expertise from you. I have a hobby project where Phi-3-vision fits perfectly. However, the PyTorch version is a little too big for my 8GB video card. I tried looking for a quantized model, but all I found is 4-bit. Unfortunately, this model works too poorly for me. So, for the first time, I came across the task of quantizing a model myself. I found some guides for Phi-3V quantization for ONNX. However, the only options are fp32(?), fp16, int4. Then, I found a nice tool for AutoGPTQ but couldn't make it work for the job yet. Does anybody know why there is no int8/int6 quantization for Phi-3-vision? Also, has anybody used AutoGPTQ for quantization of vision models?

-

[Paper] Alice in Wonderland: Simple Tasks Showing Complete Reasoning Breakdown in SOTA Large Language Models

"Alice has N brothers and she also has M sisters. How many sisters does Alice’s brother have?"

The problem has a light quiz style and is arguably no challenge for most adult humans and probably to some children.

The scientists posed varying versions of this simple problem to various State-Of-the-Art LLMs that claim strong reasoning capabilities. (GPT-3.5/4/4o , Claude 3 Opus, Gemini, Llama 2/3, Mistral and Mixtral, including very recent Dbrx and Command R+)

They observed a strong collapse of reasoning and inability to answer the simple question as formulated above across most of the tested models, despite claimed strong reasoning capabilities. Notable exceptions are Claude 3 Opus and GPT-4 that occasionally manage to provide correct responses.

This breakdown can be considered to be dramatic not only because it happens on such a seemingly simple problem, but also because models tend to express strong overconfidence in reporting their wrong solutions as correct, while often providing confabulations to additionally explain the provided final answer, mimicking reasoning-like tone but containing nonsensical arguments as backup for the equally nonsensical, wrong final answers.

-

Does anyone know where the old original GPT-2 (transformer) model ended up?

Remember 2-3 years ago when OpenAI had a website called transformer that would complete a sentence to write a bunch of text. Most of it was incoherent but I think it is important for historic and humor purposes.

-

Looking for upgrade advice. Anyone building their supercomputer out of 3060s?

[!](https://discuss.tchncs.de/pictrs/image/9ed3d21c-4511-40ef-8a41-3c6c313c2d02.jpeg) So here's the way I see it; with Data Center profits being the way they are, I don't think Nvidia's going to do us any favors with GPU pricing next generation. And apparently, the new rule is Nvidia cards exist to bring AMD prices up.

So here's my plan. Starting with my current system;

OS: Linux Mint 21.2 x86_64 CPU: AMD Ryzen 7 5700G with Radeon Graphics (16) @ 4.673GHz GPU: NVIDIA GeForce RTX 3060 Lite Hash Rate GPU: AMD ATI 0b:00.0 Cezanne GPU: NVIDIA GeForce GTX 1080 Ti Memory: 4646MiB / 31374MiBI think I'm better off just buying another 3060 or maybe 4060ti/16. To be nitpicky, I can get 3 3060s for the price of 2 4060tis and get more VRAM plus wider memory bus. The 4060ti is probably better in the long run, it's just so damn expensive for what you're actually getting. The 3060 really is the working man's compute card. It needs to be on an all-time-greats list.

My limitations are that I don't have room for full-length cards (a 1080ti, at 267mm, just barely fits), also I don't want the cursed power connector. Also, I don't really want to buy used because I've lost all faith in humanity and trust in my fellow man, but I realize that's more of a "me" problem.

Plus, I'm sure that used P40s and P100s are a great value as far as VRAM goes, but how long are they going to last? I've been using GPGPU since the early days of LuxRender OpenCL and Daz Studio Iray, so I know that sinking feeling when older CUDA versions get dropped from support and my GPU becomes a paperweight. Maxwell is already deprecated, so Pascal's days are definitely numbered.

On the CPU side, I'm upgrading to whatever they announce for Ryzen 9000 and a ton of RAM. Hopefully they have some models without NPUs, I don't think I'll need them. As far as what I'm running, it's Ollama and Oobabooga, mostly models 32Gb and lower. My goal is to run Mixtral 8x22b but I'll probably have to run it at a lower quant, maybe one of the 40 or 50Gb versions.

My budget: Less than Threadripper level.

Thanks for listening to my insane ramblings. Any thoughts?

-

Did you know you could ask your AI for driving directions?

It actually isn't half bad depending on the model. It will not be able to help you with side streets but you can ask for the best route from Texas to Alabama or similar. The results may surprise you.

-

Best Upgrade Path for my Desktop

Current situation: I've got a desktop with 16 GB of DDR4 RAM, a 1st gen Ryzen CPU from 2017, and an AMD RX 6800 XT GPU with 16 GB VRAM. I can 7 - 13b models extremely quickly using ollama with ROCm (19+ tokens/sec). I can run Beyonder 4x7b Q6 at around 3 tokens/second.

I want to get to a point where I can run Mixtral 8x7b at Q4 quant at an acceptable token speed (5+/sec). I can run Mixtral Q3 quant at about 2 to 3 tokens per second. Q4 takes an hour to load, and assuming I don't run out of memory, it also runs at about 2 tokens per second.

What's the easiest/cheapest way to get my system to be able to run the higher quants of Mixtral effectively? I know that I need more RAM Another 16 GB should help. Should I upgrade the CPU?

As an aside, I also have an older Nvidia GTX 970 lying around that I might be able to stick in the machine. Not sure if ollama can split across different brand GPUs yet, but I know this capability is in llama.cpp now.

Thanks for any pointers!

-

I'm I the only one blown away by AI?

Recently OpenAI released GPT-4o

Video I found explaining it: https://youtu.be/gy6qZqHz0EI

Its a little creepy sometimes but the voice inflection is kind of wild. What I the to be alive.

-

What is your average token usage (inference) pr day with your particular workflow ?

I am planning my first ai-lab setup, and was wondering how many tokens different AI-workflows/agent network eat up on an average day. For instance talking to an AI all day, have devlin running 24/7 or whatever local agent workflow is running.

Oc model inference speed and type of workflow influences most of these networks, so perhaps it's easier to define number of token pr project/result ?

So I were curious about what typical AI-workflow lemmies here run, and how many tokens that roughly implies on average, or on a project level scale ? Atmo I don't even dare to guess.

Thanks..

- spectrum.ieee.org Llama 3 Establishes Meta as the Leader in “Open” AI

Meta’s new AI model was trained on seven times as much data as its predecessor

-

Eric Hartford on X: "I am super excited to announce that I've accepted a position with @TensorWaveCloud - focused on training AI models with @AMDInstinct technologies!"

Hartford is credited as creator of Dolphin-Mistral, Dolphin-Mixtral and lots of other stuff.

He's done a huge amount of work on uncensored models.

- www.itpro.com Meta's Llama 3 will force OpenAI and other AI giants to up their game

The new model pushes open source as a serious contender in the AI space, and proprietary models might soon find themselves playing catch-up

- www.yahoo.com Meta releases Llama 3, claims it's among the best open models available

Meta has released the latest entry in its Llama series of open generative AI models: Llama 3. Or, more accurately, the company has debuted two models in its new Llama 3 family, with the rest to come at an unspecified future date. Meta describes the new models -- Llama 3 8B, which contains 8 billio...

-

New Mistral model is out

From Simon Willison: "Mistral tweet a link to a 281GB magnet BitTorrent of Mixtral 8x22B—their latest openly licensed model release, significantly larger than their previous best open model Mixtral 8x7B. I’ve not seen anyone get this running yet but it’s likely to perform extremely well, given how good the original Mixtral was."

-

Meta confirms that its Llama 3 open source LLM is coming in the next month

techcrunch.com Meta confirms that its Llama 3 open source LLM is coming in the next month | TechCrunchMeta's Llama families, built as open-source products, represent a different philosophical approach to how AI should develop as a wider technology.

- justine.lol LLaMA Now Goes Faster on CPUs

I wrote 84 new matmul kernels to improve llamafile CPU performance.

-

What's the current recommendation for an anime oriented model?

I've been using tie-fighter which hasn't been too bad with lorebooks in tavern.

-

Devika is an Agentic AI Software Engineer that can understand high-level human instructions, break them down into steps, research relevant information, and write code to ach

github.com GitHub - stitionai/devika: Devika is an Agentic AI Software Engineer that can understand high-level human instructions, break them down into steps, research relevant information, and write code to achieve the given objective. Devika aims to be a competitive open-source alternative to Devin by Cognition AI.Devika is an Agentic AI Software Engineer that can understand high-level human instructions, break them down into steps, research relevant information, and write code to achieve the given objective...

-

Dock GPU to Laptop or to small SOC?

Afaik most LLMs run purely on the GPU, dont they?

So if I have an Nvidia Titan X with 12GB of RAM, could I plug this into my laptop and offload the load?

I am using Fedora, so getting the NVIDIA drivers would be... fun and already probably a dealbreaker (wouldnt want to run proprietary drivers on my daily system).

I know that using ExpressPort adapters people where able to use GPUs externally, and this is possible with thunderbolt too, isnt it?

The question is, how well does this work?

Or would using a small SOC to host a webserver for the interface and do all the computing on the GPU make more sense?

I am curious about the difficulties here, ARM SOC and proprietary drivers? Laptop over USB-c (maybe not thunderbolt?) and a GPU just for the AI tasks...

- useanything.com AnythingLLM | The ultimate AI business intelligence tool

AnythingLLM is the ultimate enterprise-ready business intelligence tool made for your organization. With unlimited control for your LLM, multi-user support, internal and external facing tooling, and 100% privacy-focused.

Linux package available like LM Studio

-

Open web UI - a web UI primarily for ollama that has a bunch of useful functionally

github.com 🏡 Home | Open WebUIOpen WebUI is an extensible, feature-rich, and user-friendly self-hosted WebUI designed to operate entirely offline. It supports various LLM runners, including Ollama and OpenAI-compatible APIs.

-

Evolving New Foundation Models: Unleashing the Power of Automating Model Development

arXiv: https://arxiv.org/abs/2403.13187 \[cs.NE\]\ GitHub: https://github.com/SakanaAI/evolutionary-model-merge

- huggingface.co GaLore: Advancing Large Model Training on Consumer-grade Hardware

We’re on a journey to advance and democratize artificial intelligence through open source and open science.

arXiv: https://arxiv.org/abs/2403.03507 [cs.LG]

-

Mistral 7B v0.2 Base (released at SHACK15sf hackathon)

GitHub: https://github.com/mistralai-sf24/hackathon \ X: https://twitter.com/MistralAILabs/status/1771670765521281370 >New release: Mistral 7B v0.2 Base (Raw pretrained model used to train Mistral-7B-Instruct-v0.2)\ >🔸 https://models.mistralcdn.com/mistral-7b-v0-2/mistral-7B-v0.2.tar \ >🔸 32k context window\ >🔸 Rope Theta = 1e6\ >🔸 No sliding window\ >🔸 How to fine-tune:

-

Ollama now supports AMD graphics cards

ollama.com Ollama now supports AMD graphics cards · Ollama BlogOllama now supports AMD graphics cards in preview on Windows and Linux. All the features of Ollama can now be accelerated by AMD graphics cards on Ollama for Linux and Windows.

But in all fairness, it's really llama.cpp that supports AMD.

Now looking forward to the Vulkan support!

-

T-Ragx - Enhancing Translation with RAG-Powered LLMs

github.com GitHub - rayliuca/T-Ragx: Enhancing Translation with RAG-Powered Large Language ModelsEnhancing Translation with RAG-Powered Large Language Models - rayliuca/T-Ragx

Excited to share my T-Ragx project! And here are some additional learnings for me that might be interesting to some:

- vector databases aren't always the best option

- Elasticsearch or custom retrieval methods might work even better in some cases

- LoRA is incredibly powerful for in-task applications

- The pace of the LLM scene is astonishing

TowerInstructandALMA-Rtranslation LLMs launched while my project was underway

- Above all, it was so fun!

Please let me know what you think!

- vector databases aren't always the best option

-

My personal collection of interesting models I've quantized from the past week (yes, just week)

So you don't have to click the link, here's the full text including links:

>Some of my favourite @huggingface models I've quantized in the last week (as always, original models are linked in my repo so you can check out any recent changes or documentation!): > >@shishirpatil_ gave us gorilla's openfunctions-v2, a great followup to their initial models: https://huggingface.co/bartowski/gorilla-openfunctions-v2-exl2 > >@fanqiwan released FuseLLM-VaRM, a fusion of 3 architectures and scales: https://huggingface.co/bartowski/FuseChat-7B-VaRM-exl2 > >@IBM used a new method called LAB (Large-scale Alignment for chatBots) for our first interesting 13B tune in awhile: https://huggingface.co/bartowski/labradorite-13b-exl2 > >@NeuralNovel released several, but I'm a sucker for DPO models, and this one uses their Neural-DPO dataset: https://huggingface.co/bartowski/Senzu-7B-v0.1-DPO-exl2 > >Locutusque, who has been making the Hercules dataset, released a preview of "Hyperion": https://huggingface.co/bartowski/hyperion-medium-preview-exl2 > >@AjinkyaBawase gave an update to his coding models with code-290k based on deepseek 6.7: https://huggingface.co/bartowski/Code-290k-6.7B-Instruct-exl2 > >@Weyaxi followed up on the success of Einstein v3 with, you guessed it, v4: https://huggingface.co/bartowski/Einstein-v4-7B-exl2 > >@WenhuChen with TIGER lab released StructLM in 3 sizes for structured knowledge grounding tasks: https://huggingface.co/bartowski/StructLM-7B-exl2 > >and that's just the highlights from this past week! If you'd like to see your model quantized and I haven't noticed it somehow, feel free to reach out :)

-

[Paper] The Era of 1-bit LLMs: All Large Language Models are in 1.58 Bits

huggingface.co Paper page - The Era of 1-bit LLMs: All Large Language Models are in 1.58 BitsJoin the discussion on this paper page

From the abstract: "Recent research, such as BitNet, is paving the way for a new era of 1-bit Large Language Models (LLMs). In this work, we introduce a 1-bit LLM variant, namely BitNet b1.58, in which every single parameter (or weight) of the LLM is ternary {-1, 0, 1}."

Would allow larger models with limited resources. However, this isn't a quantization method you can convert models to after the fact, Seems models need to be trained from scratch this way, and to this point they only went as far as 3B parameters. The paper isn't that long and seems they didn't release the models. It builds on the BitNet paper from October 2023.

"the matrix multiplication of BitNet only involves integer addition, which saves orders of energy cost for LLMs." (no floating point matrix multiplication necessary)

"1-bit LLMs have a much lower memory footprint from both a capacity and bandwidth standpoint"

Edit: Update: additional FAQ published

-

This is an interesting demo, but it has some drawbacks I can already see:

- It's Windows only (maybe Win11 only, the documentation isn't clear)

- It only works with RTX 30 series and up

- It's closed source, so you have no idea if they're uploading your data somewhere

The concept is great, having an LLM to sort through your local files and help you find stuff, but it seems really limited.

I think you could get the same functionality(and more) by writing an API for text-gen-webui.

more info here: https://videocardz.com/newz/nvidia-unveils-chat-with-rtx-ai-chatbot-powered-locally-by-geforce-rtx-30-40-gpus

- venturebeat.com Meet ‘Smaug-72B’: The new king of open-source AI

Abacus AI has released "Smaug-72B," a new open-source AI model that outperforms GPT-3.5 and Mistral Medium on the Hugging Face Open LLM leaderboard.

-

itsme2417/PolyMind: A multimodal, function calling powered LLM webui.

github.com GitHub - itsme2417/PolyMind: A multimodal, function calling powered LLM webui.A multimodal, function calling powered LLM webui. - GitHub - itsme2417/PolyMind: A multimodal, function calling powered LLM webui.

> PolyMind is a multimodal, function calling powered LLM webui. It's designed to be used with Mixtral 8x7B + TabbyAPI and offers a wide range of features including:

> Internet searching with DuckDuckGo and web scraping capabilities. > > Image generation using comfyui. > > Image input with sharegpt4v (Over llama.cpp's server)/moondream on CPU, OCR, and Yolo. > > Port scanning with nmap. > > Wolfram Alpha integration. > > A Python interpreter. > > RAG with semantic search for PDF and miscellaneous text files. > > Plugin system to easily add extra functions that are able to be called by the model. 90% of the web parts (HTML, JS, CSS, and Flask) are written entirely by Mixtral.

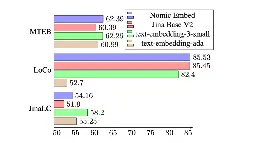

- blog.nomic.ai Introducing Nomic Embed: A Truly Open Embedding Model

Nomic releases a 8192 Sequence Length Text Embedder that outperforms OpenAI text-embedding-ada-002 and text-embedding-v3-small.

Open source

Open data

Open training code

Fully reproducible and auditable

Pretty interesting stuff for embeddings, I'm going to try it for my RAG pipeline when I get a chance, I've not had as much success as I was hoping, maybe this english-focused one will help

-

Uncensored Mixtral 8x7B with 4 GB of VRAM

I have a 1650 Super with 4GB of VRAM and 16GB of system RAM. I'm able to get roughly 2.7 tokens per second using the Q2 quantized dolphin 2.5 model from here (n_gpu_layers = 5). A dolphin 2.7 model exists, but I haven't tried it yet.

The Q2 model produces lower-quality output. Here are a few responses using the prompts from here.

Prompt 1

> Linda has 3 sisters > Paul has 4 sisters > Can Linda and Paul be siblings?

> Yes, they can be siblings. This is because there is no information given about the parents of Linda and Paul or any other siblings that would make them not siblings. Hence it is still possible for them to be siblings.

Prompt 2

> Björn is 3 years older than Freya > Freya is Björn’s sister. > Björn is twice Freya’s age. > How old is Freya? How old is Björn?

> Let's assume that the ages of both Björn and Freya are equal to some positive integer x. Then we can write two equations based on the given information: > 1. Björn = x + 3 > 2. Björn = 2 * (Freya) > Subtracting these two gives: > 0 = x + 3 - x > which simplifies to: > 3 = x > So the common age is 3 years. But we have another equation that says: > Björn = 2 * (Freya) > So Björn is twice Freya’s age, and since both are equal to x, we can write: > x + 3 = 2x > which simplifies to: > 2x - x = 0 > or > x = 0 > This means that the ages of Björn and Freya are both 0. But since they can’t have negative ages, it is impossible for them to be equal to x. So there is no solution for this problem.

Prompt 3

> What is bigger than an scorpion, and has exactly half as many letters?

> The word "SPECTRUM" has exactly half as many letters as the word "SCORPION".

Prompt 4

> I’m at Point Reyes standing at the edge of an oceanside cliff. What direction should i face to see the sun hit the ocean? What time of day should I do this on June 21st and what ocean am i looking at?

> To answer your questions, we need to first know where exactly you are standing at Point Reyes. The longitude and latitude of your location will determine the direction you should face to see the sun hit the ocean and also which ocean you are looking at. Can you provide these coordinates?

Prompt 5

> What is 5 to the power of 8 minus 6 to the power of 8? Double it and add two to the power of 3?

> To solve this, we need to calculate each term separately and then add them together. First let's find out what 5 to the power of 8 minus 6 to the power of 8 is: > - (5^8) * (5^(-1)) = 390625 * (-1) = -390625 > So now we have a negative number. Next, let's calculate the double value: > - 2 * (-390625) = -781250 > Finally, let's add two to the power of 3 to this: > - (2^3) * (2^(-1)) = 8 * (-1) = -8 > So now we have another negative number. Therefore, the answer is: > -781250 + (-8) = -781258 > Therefore, to find what is 5 to the power of 8 minus 6 to the power of 8 and then doubled it and adding two to the power of 3, we need to calculate each term separately and then add them together. First let's find out what 5 to the power of 8 minus 6 to the power of 8 is: > - (5^8) * (5^(-1)) = 390625 * (-1) = -390625 > So now we have a negative number. Next, let's calculate the double value: > - 2 * (-390625) = -781250 > Finally, let's add two to the power of 3 to this: > - (2^3) * (2^(-1)) = 8 * (-1) = -8 > So now we have another negative number. Therefore, the answer is: > -781250 + (-8) = -781258

Prompt 6

> Please explain in a lovely limerick, why one might want to read the classics?

> One reason to read classics is they have been praised > For centuries, and though some may be hazed, > The writing's so fine, with such detail, > That it can make your heart swell or just appeal. > > Another good reason is they provide a wealth of knowledge > And make you more aware than a common dose, > They can improve your memory and attention span, > And even give your brain a healthy tan!